Create Apache Kafka® table on Apache Flink® on HDInsight on AKS

Important

This feature is currently in preview. The Supplemental Terms of Use for Microsoft Azure Previews include more legal terms that apply to Azure features that are in beta, in preview, or otherwise not yet released into general availability. For information about this specific preview, see Azure HDInsight on AKS preview information. For questions or feature suggestions, please submit a request on AskHDInsight with the details and follow us for more updates on Azure HDInsight Community.

This example shows how to create a Kafka table in Apache Flink SQL.

Prerequisites

Kafka SQL connector on Apache Flink

The Kafka connector reads data from and writes data to Kafka topics. For more information, see Apache Kafka SQL Connector.

Create a Kafka table on Flink SQL

Prepare topic and data on HDInsight Kafka

Prepare messages with weblog.py

import random
import json
import time
from datetime import datetime

user_set = [
    'John',
    'XiaoMing',
    'Mike',
    'Tom',
    'Machael',
    'Zheng Hu',
    'Zark',
    'Tim',
    'Andrew',
    'Pick',
    'Sean',
    'Luke',
    'Chunck'
]

web_set = [
    'https://google.com',
    'https://facebook.com?id=1',
    'https://tmall.com',
    'https://baidu.com',
    'https://taobao.com',
    'https://aliyun.com',
    'https://apache.com',
    'https://flink.apache.com',
    'https://hbase.apache.com',
    'https://github.com',
    'https://gmail.com',
    'https://stackoverflow.com',
    'https://python.org'
]

def main():
    while True:
        if random.randrange(10) < 4:
            url = random.choice(web_set[:3])
        else:
            url = random.choice(web_set)

        log_entry = {
            'userName': random.choice(user_set),
            'visitURL': url,
            'ts': datetime.now().strftime("%m/%d/%Y %H:%M:%S")
        }

        print(json.dumps(log_entry))
        time.sleep(0.05)

if __name__ == "__main__":
    main()
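The generator skews traffic on purpose: in 4 out of 10 iterations it draws from only the first three URLs, and otherwise from the full list. As a rough check of what that means for the data that lands in the topic, the following sketch computes the exact probability of each URL. The helper name `url_probabilities` and its parameters are introduced here for illustration and are not part of weblog.py.

```python
from fractions import Fraction

# Same URL list as weblog.py.
web_set = [
    'https://google.com', 'https://facebook.com?id=1', 'https://tmall.com',
    'https://baidu.com', 'https://taobao.com', 'https://aliyun.com',
    'https://apache.com', 'https://flink.apache.com', 'https://hbase.apache.com',
    'https://github.com', 'https://gmail.com', 'https://stackoverflow.com',
    'https://python.org',
]

def url_probabilities(urls, hot_count=3, hot_weight=Fraction(4, 10)):
    """Exact probability of each URL under weblog.py's sampling rule.

    hot_weight mirrors `random.randrange(10) < 4` (4 chances in 10);
    hot_count mirrors the slice `web_set[:3]`.
    """
    probs = {}
    for index, url in enumerate(urls):
        # Share from the uniform draw over the full list.
        p = (1 - hot_weight) * Fraction(1, len(urls))
        if index < hot_count:
            # Extra share from the draw restricted to the first URLs.
            p += hot_weight * Fraction(1, hot_count)
        probs[url] = p
    return probs

probs = url_probabilities(web_set)
hot_share = sum(probs[url] for url in web_set[:3])
print(f"Share of events hitting the first three URLs: {float(hot_share):.3f}")
```

Under these assumptions the first three URLs together account for 7/13 of all events, which is why they dominate the consumed output.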

Pipeline to Kafka topic

sshuser@hn0-contsk:~$ python weblog.py | /usr/hdp/current/kafka-broker/bin/kafka-console-producer.sh --bootstrap-server wn0-contsk:9092 --topic click_events

Other commands:

-- create topic

/usr/hdp/current/kafka-broker/bin/kafka-topics.sh --create --replication-factor 2 --partitions 3 --topic click_events --bootstrap-server wn0-contsk:9092

-- delete topic

/usr/hdp/current/kafka-broker/bin/kafka-topics.sh --delete --topic click_events --bootstrap-server wn0-contsk:9092

-- consume topic

sshuser@hn0-contsk:~$ /usr/hdp/current/kafka-broker/bin/kafka-console-consumer.sh --bootstrap-server wn0-contsk:9092 --topic click_events --from-beginning

{"userName": "Luke", "visitURL": "https://flink.apache.com", "ts": "06/26/2023 14:33:43"}

{"userName": "Tom", "visitURL": "https://stackoverflow.com", "ts": "06/26/2023 14:33:43"}

{"userName": "Chunck", "visitURL": "https://google.com", "ts": "06/26/2023 14:33:44"}

{"userName": "Chunck", "visitURL": "https://facebook.com?id=1", "ts": "06/26/2023 14:33:44"}

{"userName": "John", "visitURL": "https://tmall.com", "ts": "06/26/2023 14:33:44"}

{"userName": "Andrew", "visitURL": "https://facebook.com?id=1", "ts": "06/26/2023 14:33:44"}

{"userName": "John", "visitURL": "https://tmall.com", "ts": "06/26/2023 14:33:44"}

{"userName": "Pick", "visitURL": "https://google.com", "ts": "06/26/2023 14:33:44"}

{"userName": "Mike", "visitURL": "https://tmall.com", "ts": "06/26/2023 14:33:44"}

{"userName": "Zheng Hu", "visitURL": "https://tmall.com", "ts": "06/26/2023 14:33:44"}

{"userName": "Luke", "visitURL": "https://facebook.com?id=1", "ts": "06/26/2023 14:33:44"}

{"userName": "John", "visitURL": "https://flink.apache.com", "ts": "06/26/2023 14:33:44"}

Apache Flink SQL client

Detailed instructions are provided on how to use Secure Shell for Flink SQL client.

Download Kafka SQL Connector & Dependencies into SSH

This step uses the Kafka 3.2.0 dependencies. Update the commands to match the Kafka version on your HDInsight cluster.

wget https://repo1.maven.org/maven2/org/apache/kafka/kafka-clients/3.2.0/kafka-clients-3.2.0.jar

wget https://repo1.maven.org/maven2/org/apache/flink/flink-connector-kafka/1.17.0/flink-connector-kafka-1.17.0.jar
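Both download URLs follow the standard Maven repository layout, so adapting them to another version is a matter of substituting the version string in two places. The following sketch builds the URLs from their coordinates. The helper name `maven_jar_url` is hypothetical, and the sketch does not check whether a given version is actually published.

```python
MAVEN_BASE = "https://repo1.maven.org/maven2"

def maven_jar_url(group_id, artifact_id, version):
    """Build a JAR URL from Maven coordinates using the standard repository layout."""
    group_path = group_id.replace(".", "/")
    return f"{MAVEN_BASE}/{group_path}/{artifact_id}/{version}/{artifact_id}-{version}.jar"

# Versions used in this article; replace them with the ones matching your clusters.
kafka_clients_url = maven_jar_url("org.apache.kafka", "kafka-clients", "3.2.0")
flink_connector_url = maven_jar_url("org.apache.flink", "flink-connector-kafka", "1.17.0")

print(kafka_clients_url)
print(flink_connector_url)
```

The printed URLs can be passed to `wget` in place of the hard-coded ones above.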

Connect to Apache Flink SQL Client

Start the Flink SQL Client and load the two downloaded JARs with the -j option.

msdata@pod-0 [ /opt/flink-webssh ]$ bin/sql-client.sh -j flink-connector-kafka-1.17.0.jar -j kafka-clients-3.2.0.jar

Create Kafka table on Apache Flink SQL

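Create the Kafka table in Flink SQL, then query it. The `ts` column is declared as METADATA FROM 'timestamp', so it holds the Kafka record timestamp rather than the `ts` field of the JSON payload.

Replace the placeholders in the following snippet with the IP addresses of your Kafka bootstrap servers.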

CREATE TABLE KafkaTable (
  `userName` STRING,
  `visitURL` STRING,
  `ts` TIMESTAMP(3) METADATA FROM 'timestamp'
) WITH (
  'connector' = 'kafka',
  'topic' = 'click_events',
  'properties.bootstrap.servers' = '<update-kafka-bootstrapserver-ip>:9092,<update-kafka-bootstrapserver-ip>:9092,<update-kafka-bootstrapserver-ip>:9092',
  'properties.group.id' = 'my_group',
  'scan.startup.mode' = 'earliest-offset',
  'format' = 'json'
);

select * from KafkaTable;
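The `properties.bootstrap.servers` value is a comma-separated list of `host:port` pairs, which is easy to mistype when edited by hand. As a minimal sketch, the following helpers fill in the statement above from a list of broker addresses. The function names and the `10.0.0.x` addresses are placeholders chosen for illustration; substitute the IPs of your own Kafka brokers.

```python
def bootstrap_servers(hosts, port=9092):
    """Join broker hosts into the comma-separated host:port list Kafka expects."""
    return ",".join(f"{host}:{port}" for host in hosts)

def kafka_table_ddl(hosts, table="KafkaTable", topic="click_events", group_id="my_group"):
    """Render the CREATE TABLE statement from this article for the given brokers."""
    return f"""CREATE TABLE {table} (
  `userName` STRING,
  `visitURL` STRING,
  `ts` TIMESTAMP(3) METADATA FROM 'timestamp'
) WITH (
  'connector' = 'kafka',
  'topic' = '{topic}',
  'properties.bootstrap.servers' = '{bootstrap_servers(hosts)}',
  'properties.group.id' = '{group_id}',
  'scan.startup.mode' = 'earliest-offset',
  'format' = 'json'
);"""

# Placeholder addresses, not real brokers.
ddl = kafka_table_ddl(["10.0.0.4", "10.0.0.5", "10.0.0.6"])
print(ddl)
```

The printed statement can be pasted into the Flink SQL Client session started earlier.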

Produce Kafka messages

Let's now produce Kafka messages to the same topic, using HDInsight Kafka.

python weblog.py | /usr/hdp/current/kafka-broker/bin/kafka-console-producer.sh --bootstrap-server wn0-contsk:9092 --topic click_events

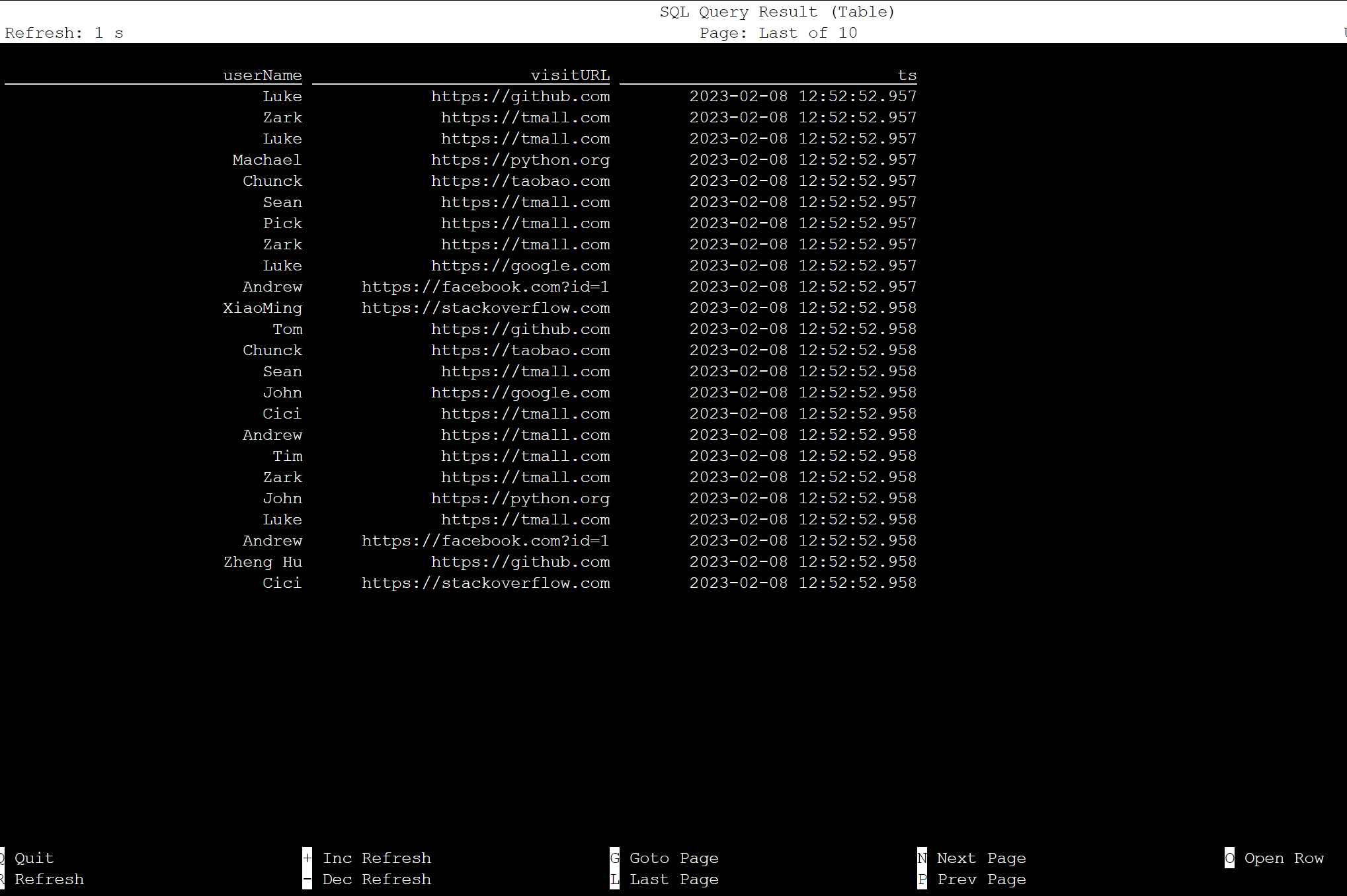

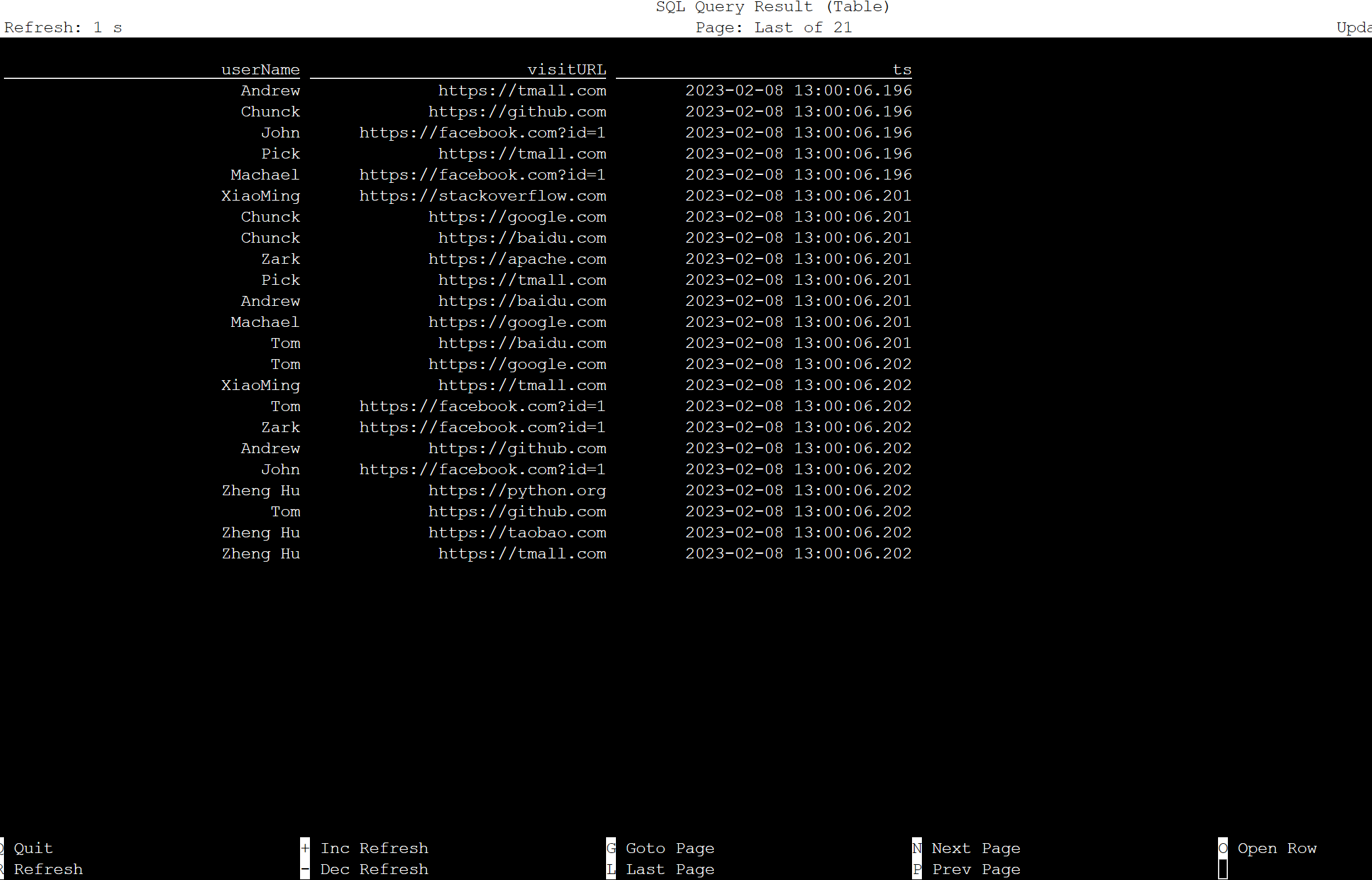

Table on Apache Flink SQL

You can monitor the table on Flink SQL.

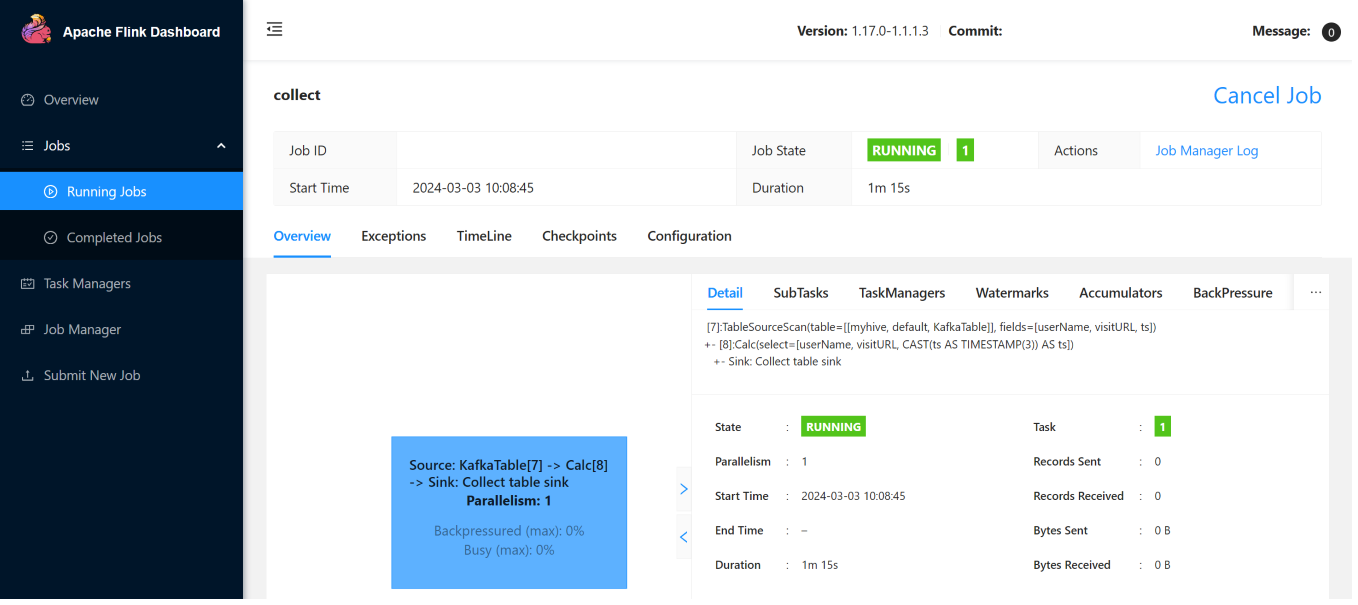

Here are the streaming jobs on Flink Web UI.

Reference

- Apache Kafka SQL Connector

- Apache, Apache Kafka, Kafka, Apache Flink, Flink, and associated open source project names are trademarks of the Apache Software Foundation (ASF).
